AI-RAN is becoming one of the most discussed topics in the telecom industry. Some people see it as the next stage of wireless network evolution, while others believe it may be overhyped before the business model, cost structure, and technical architecture are fully verified. To understand AI-RAN clearly, it is necessary to look beyond the marketing term and examine how radio access networks, AI computing, chip architecture, edge computing, and 6G strategy are coming together.

AI-RAN stands for Artificial Intelligence Radio Access Network. In simple terms, it means applying AI technologies to the radio access network, or building a wireless access network that can process communication workloads and AI workloads in a more integrated way. It is not only about putting AI software into a base station. The deeper concept is to transform the base station from a single-purpose communication node into an intelligent edge computing node.

This article explains AI-RAN from a solution and engineering perspective. It covers the evolution from traditional RAN to Open RAN, the role of CPU, GPU, FPGA, and ASIC chips, the meaning of AI for RAN, AI and RAN, and AI on RAN, the industry progress led by companies such as NVIDIA, SoftBank, Nokia, Ericsson, Huawei, and ZTE, and the major deployment challenges operators must consider.

Understanding RAN Before Understanding AI-RAN

RAN means Radio Access Network. In a mobile communication network, there are three major parts: the core network, the transport network, and the radio access network. RAN is the first network layer that connects user devices such as smartphones, industrial terminals, sensors, vehicles, and IoT devices to the operator network.

In the 4G era, a base station was commonly built from antennas, remote radio units (RRUs), baseband units (BBUs), and the links between them. The antennas and RRUs handled wireless signal transmission and reception, while the BBU handled baseband processing tasks such as modulation, demodulation, encoding, decoding, channel estimation, and resource scheduling.

In the 5G era, the architecture changed. The antenna and RRU became more tightly integrated and evolved into the AAU, or Active Antenna Unit. At the same time, BBU functions were split between the CU and the DU. The CU, or Centralized Unit, mainly handles non-real-time, higher-layer functions. The DU, or Distributed Unit, mainly handles real-time baseband processing. This split made the network more flexible, but it also made the architecture more complex.

Why RAN Is Difficult to Open and Virtualize

The core network was easier to virtualize because many of its tasks are related to routing, switching, session control, and service management. This led to the rise of NFV, or Network Functions Virtualization. RAN is much harder. Baseband processing has strict requirements for latency, computing density, timing accuracy, and real-time performance.

Traditional base stations were usually closed systems built by telecom equipment vendors. They used customized ASIC chips and proprietary software. This “black box” model was efficient because ASIC chips are designed for fixed workloads. For RAN baseband processing, ASICs can offer high computing density, low power consumption, and stable latency.

Operators later pushed the industry toward more open, white-box architectures. The goal was to decouple hardware from software, standardize interfaces, and allow general-purpose servers and chips to run telecom workloads. This direction gave rise to concepts and initiatives such as C-RAN, xRAN, O-RAN, vRAN, and Open RAN.

From C-RAN to Open RAN

In the 4G era, China Mobile promoted C-RAN, or Centralized RAN. The idea was to move multiple distributed BBUs into a centralized equipment room and build a baseband pool. The pool would process baseband workloads centrally and distribute signals to remote radio units through fiber links.
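The main benefit of a baseband pool is statistical multiplexing: sites rarely reach their busy hour at the same time, so a shared pool can be sized for the peak of the combined load rather than the sum of every site's individual peak. The sketch below shows that arithmetic for three hypothetical sites over four sample time slots; the site names and load figures are invented for illustration, not measurements.

```python
# Hypothetical baseband load (arbitrary processing units) in four time slots
# for three sites whose busy hours differ.
site_loads = {
    "office": [2, 8, 9, 3],
    "residential": [7, 3, 2, 8],
    "transit": [3, 4, 8, 2],
}


def distributed_capacity(loads: dict[str, list[int]]) -> int:
    """Each site has its own BBU, so each must be sized for its own peak."""
    return sum(max(series) for series in loads.values())


def pooled_capacity(loads: dict[str, list[int]]) -> int:
    """A centralized pool only needs to cover the peak of the combined load."""
    combined = [sum(slot) for slot in zip(*loads.values())]
    return max(combined)


def pooling_gain(loads: dict[str, list[int]]) -> float:
    """Ratio of distributed to pooled capacity (> 1 means pooling saves capacity)."""
    return distributed_capacity(loads) / pooled_capacity(loads)


if __name__ == "__main__":
    print("distributed:", distributed_capacity(site_loads))
    print("pooled:", pooled_capacity(site_loads))
    print(f"pooling gain: {pooling_gain(site_loads):.2f}")
```

With these numbers the separate BBUs must provide 25 units in total, while the pool needs only 19, because the three peaks fall in different slots.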

Open RAN went further. Its key idea is modular architecture and standardized interfaces. RU, DU, and CU can come from different vendors if the interfaces are compatible. Baseband software can also be separated from dedicated chips and run on general-purpose platforms such as x86 or ARM servers.
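The multi-vendor idea comes down to a structural rule: every pair of adjacent components must implement the same interface specification. The sketch below models that rule. The interface names follow common usage (O-RAN's open fronthaul between RU and DU, 3GPP's F1 between DU and CU), but the vendor names, the version labels, and the simple "versions must match" check are hypothetical simplifications.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    """A RAN building block (RU, DU or CU) supplied by some vendor."""
    role: str
    vendor: str
    # Interface name -> version label this component implements (hypothetical labels).
    interfaces: dict[str, str] = field(default_factory=dict)


# Interface assumed to connect each pair of adjacent roles in this simplified chain.
LINKS = [("RU", "DU", "open-fronthaul"), ("DU", "CU", "F1")]


def incompatible_links(parts: dict[str, Component]) -> list[str]:
    """Describe every adjacent pair whose interface versions are missing or differ."""
    problems = []
    for left, right, iface in LINKS:
        a = parts[left].interfaces.get(iface)
        b = parts[right].interfaces.get(iface)
        if a is None or a != b:
            problems.append(
                f"{left} ({parts[left].vendor}) <-{iface}-> "
                f"{right} ({parts[right].vendor}): {a} vs {b}"
            )
    return problems


if __name__ == "__main__":
    chain = {
        "RU": Component("RU", "VendorA", {"open-fronthaul": "v2"}),
        "DU": Component("DU", "VendorB", {"open-fronthaul": "v2", "F1": "v1"}),
        "CU": Component("CU", "VendorC", {"F1": "v1"}),
    }
    print(incompatible_links(chain) or "all interfaces compatible")
```

Real interoperability testing covers timing, performance, and feature profiles, not just version labels; the sketch only captures why standardized interfaces are what allow components from different vendors to be combined.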

However, Open RAN also exposed a major engineering problem. General-purpose CPU platforms are flexible, but compared with dedicated ASIC-based systems they may consume more power, deliver lower efficiency, and show less stable latency. This is one reason many Open RAN deployments have struggled to reach large-scale commercial operation. RAN is not only a software problem. It is also a real-time computing and energy-efficiency problem.

Why GPU Enters the RAN Discussion

The logic of AI-RAN begins with chip architecture. In telecom and computing systems, the main types of processing chips are CPUs, GPUs, FPGAs, and ASICs. The CPU represents general-purpose computing and is often associated with Open RAN-style architecture. The ASIC represents specialized telecom equipment. The FPGA provides flexibility and is often used in prototyping or smaller-scale specialized deployments. The GPU is the key new force behind AI-RAN.

NVIDIA’s AI-RAN strategy is based on bringing GPU computing into base station systems. The goal is not only to use GPUs for baseband processing, but also to run AI models close to the network edge. If a base station can process both RAN workloads and AI workloads, it may become a new type of edge AI infrastructure.

This matters because telecom equipment procurement is a very large market, with annual global telecom equipment spending exceeding 100 billion USD. If GPUs become part of base station architecture, the telecom network could become a major new computing market.

AI-RAN Is More Than a GPU Base Station

The most important idea behind AI-RAN is not simply “installing GPUs in base stations.” The more strategic idea is to make the base station a low-latency AI edge server with 5G and future 6G connectivity. In this model, the base station does two things at the same time: it processes wireless signals and it runs AI inference for nearby users, devices, vehicles, cameras, robots, and industrial systems.

AI can also improve the RAN itself. With AI algorithms and GPU acceleration, the network may perform more intelligent channel state prediction, dynamic multi-user interference identification, millimeter-wave beam optimization, traffic forecasting, energy-saving control, and radio resource scheduling. These capabilities could improve network performance and reduce operational complexity.
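To make "beam optimization" concrete, the sketch below shows the classical baseline: an exhaustive search over a DFT beam codebook for a uniform linear array with half-wavelength spacing, keeping the beam with the highest received power |w^H h|^2. Sweeping every beam costs measurement time, which is the kind of overhead AI-based beam management aims to reduce by predicting good beams from fewer measurements. The 8-antenna array and the single-path channel are hypothetical assumptions, and this is not a description of any vendor's algorithm.

```python
import cmath
import math


def steering_vector(n_antennas: int, sin_theta: float) -> list[complex]:
    """Array response of a uniform linear array with half-wavelength spacing."""
    return [cmath.exp(1j * math.pi * n * sin_theta) for n in range(n_antennas)]


def dft_codebook(n_antennas: int) -> list[list[complex]]:
    """N orthogonal unit-norm DFT beams; beam k points where sin(theta) = 2k/N (mod 2)."""
    scale = 1 / math.sqrt(n_antennas)
    return [
        [scale * cmath.exp(2j * math.pi * n * k / n_antennas) for n in range(n_antennas)]
        for k in range(n_antennas)
    ]


def beam_gain(beam: list[complex], channel: list[complex]) -> float:
    """Received power |w^H h|^2 when using beam w on channel h."""
    return abs(sum(w.conjugate() * h for w, h in zip(beam, channel))) ** 2


def best_beam(channel: list[complex]) -> tuple[int, float]:
    """Exhaustive sweep: evaluate every beam in the codebook and keep the strongest."""
    gains = [beam_gain(b, channel) for b in dft_codebook(len(channel))]
    k = max(range(len(gains)), key=gains.__getitem__)
    return k, gains[k]


if __name__ == "__main__":
    h = steering_vector(8, sin_theta=0.5)  # hypothetical single-path channel
    print(best_beam(h))
```

For a channel arriving from sin(theta) = 0.5, beam 2 aligns exactly and collects the full array gain of 8, while the other orthogonal beams receive essentially nothing.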

From the perspective of edge computing, AI-RAN sits between cloud and device. Cloud data centers provide the strongest computing power but are far from users and can introduce higher latency. Devices such as smartphones and IoT terminals are close to users but have limited computing power. Base stations are in the middle. They are closer than the cloud and stronger than most terminals, making them a natural location for low-latency edge AI.
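The cloud, edge, and device trade-off can be written as a simple placement rule: run an inference task at the nearest tier that has enough compute, provided the end-to-end latency fits the task's budget. In the sketch below, every latency and compute figure is a hypothetical placeholder, and the model's compute time is assumed to be the same on every tier, which is a deliberate simplification.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Tier:
    name: str
    network_rtt_ms: float  # assumed round-trip network latency to reach this tier
    compute_tops: float    # assumed AI compute available to the task


# Ordered from nearest to farthest; every number here is an illustrative assumption.
TIERS = [
    Tier("device", network_rtt_ms=0.0, compute_tops=10.0),
    Tier("base-station edge", network_rtt_ms=5.0, compute_tops=500.0),
    Tier("cloud", network_rtt_ms=60.0, compute_tops=10_000.0),
]


def place(required_tops: float, compute_time_ms: float, budget_ms: float) -> Optional[str]:
    """Return the nearest tier with enough compute whose total latency fits the budget.

    compute_time_ms is assumed identical on every tier (a simplification).
    """
    for tier in TIERS:
        if tier.compute_tops < required_tops:
            continue  # too weak; try a farther but stronger tier
        if tier.network_rtt_ms + compute_time_ms <= budget_ms:
            return tier.name
    return None  # no tier meets both the compute and the latency requirement


if __name__ == "__main__":
    # A vision model too heavy for the device, with a 20 ms end-to-end budget.
    print(place(required_tops=100, compute_time_ms=8, budget_ms=20))
```

Under these assumptions, a heavy model with a tight budget lands on the base station: the device is too weak and the cloud round trip alone exceeds the budget.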

The Three Technical Directions of AI-RAN

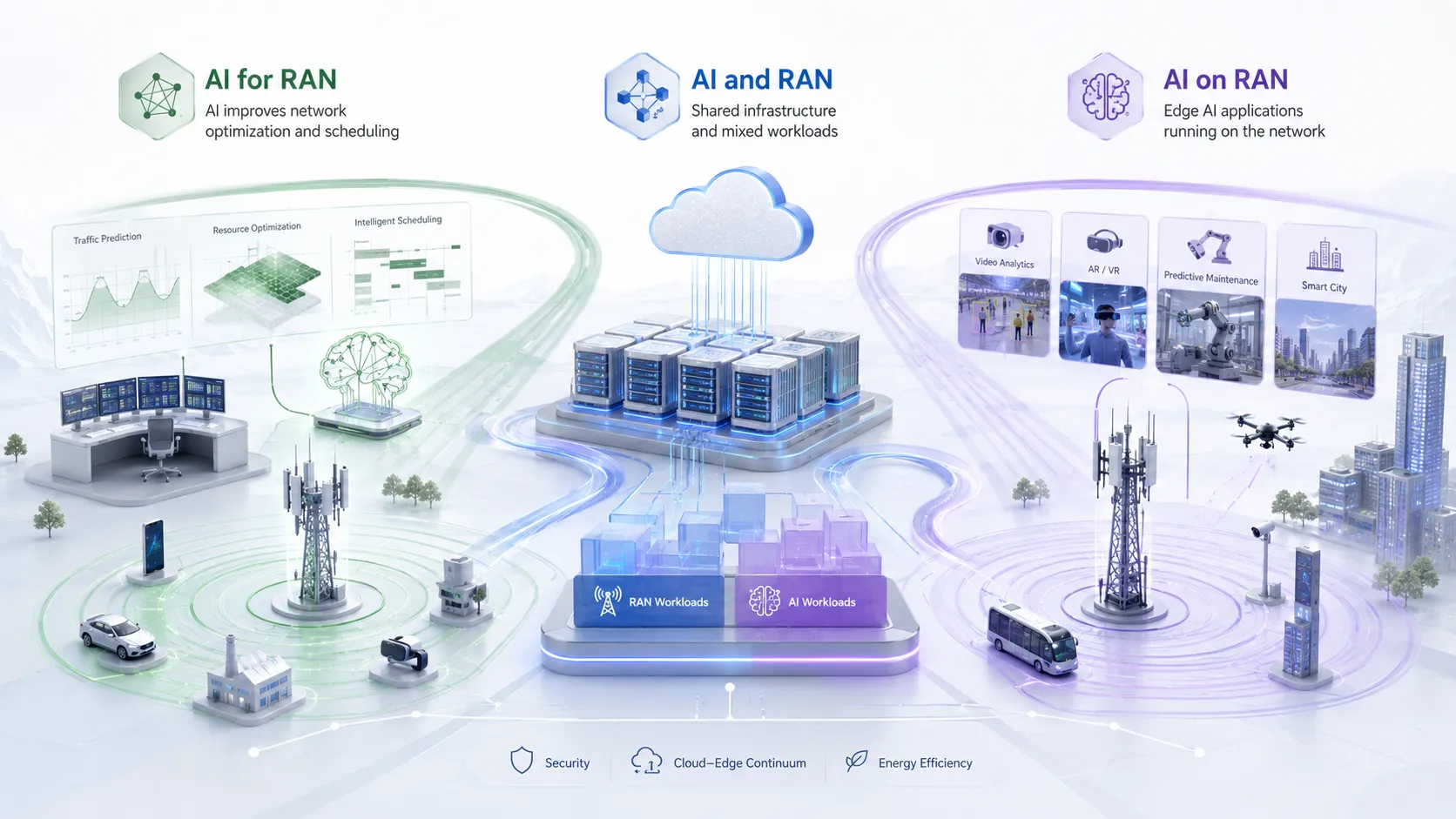

The AI-RAN Alliance divides AI-RAN research into three major directions: AI for RAN, AI and RAN, and AI on RAN. These three directions are different, but they are connected.

AI for RAN

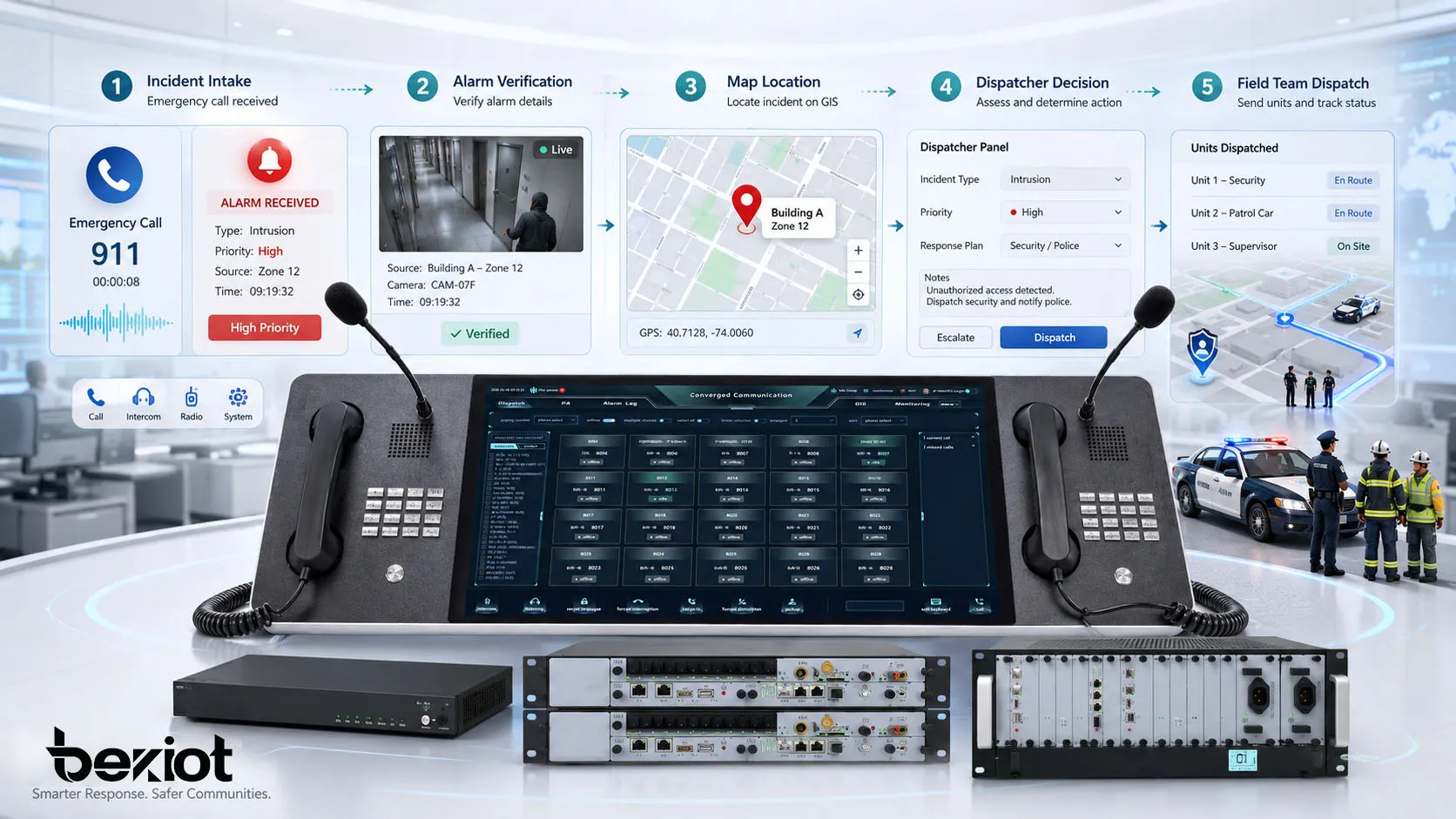

AI for RAN means using AI to improve the radio access network. The goal is to make the network more efficient, more intelligent, and easier to operate. Typical use cases include traffic prediction, intelligent scheduling, energy-saving optimization, fault detection, interference management, and beamforming optimization.
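As a toy illustration of two of these use cases working together, the sketch below forecasts the next interval's cell utilization with simple exponential smoothing and puts a capacity carrier to sleep when the forecast is low. Exponential smoothing is a deliberately simple stand-in for the learned models that AI for RAN would actually use; the smoothing factor, the sleep threshold, and the load series are hypothetical.

```python
def exponential_smoothing(history: list[float], alpha: float = 0.5) -> float:
    """One-step-ahead forecast: each new sample pulls the estimate by a factor alpha."""
    if not history:
        raise ValueError("need at least one observation")
    forecast = history[0]
    for value in history[1:]:
        forecast = alpha * value + (1 - alpha) * forecast
    return forecast


def capacity_carrier_action(history: list[float], sleep_threshold: float = 0.3) -> str:
    """Decide whether a (hypothetical) capacity carrier can sleep next interval.

    Loads are normalized cell utilization in [0, 1]; the threshold is an assumption.
    """
    predicted = exponential_smoothing(history)
    return "sleep" if predicted < sleep_threshold else "active"


if __name__ == "__main__":
    night = [0.40, 0.30, 0.20, 0.15]  # utilization falling after midnight
    day = [0.20, 0.45, 0.70, 0.80]    # utilization rising in the morning
    print(capacity_carrier_action(night), capacity_carrier_action(day))
```

A real energy-saving function would also guard against wake-up latency and coverage loss before switching anything off; the sketch shows only the predict-then-decide loop.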

This is the most direct and practical direction because it improves the telecom network itself. Operators care about this because it may improve network performance and reduce operating cost.

AI and RAN

AI and RAN means running communication functions and AI functions on the same infrastructure. In this model, RAN workloads and AI workloads share computing resources. The technical challenge is how to isolate, schedule, prioritize, and balance both types of workloads without affecting real-time communication performance.

This direction is important because it decides whether AI-RAN can become a cost-effective platform. If the same hardware can support both network communication and AI services, operators may gain better resource utilization.
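The utilization argument can be made concrete with a small calculation. In the sketch below, a shared accelerator serves RAN load first in every interval, and only the capacity that RAN leaves idle is offered to a backlog of deferrable AI work. The load profile, capacity, and AI backlog are hypothetical.

```python
def shared_utilization(
    ran_load: list[float], ai_backlog: float, capacity: float
) -> tuple[float, float, float]:
    """Serve RAN first in each interval, then spend leftover capacity on AI work.

    Returns (utilization_ran_only, utilization_ran_plus_ai, unserved_ai_work).
    """
    total_capacity = capacity * len(ran_load)
    ran_total = 0.0
    ai_served = 0.0
    for load in ran_load:
        ran = min(load, capacity)  # RAN is never throttled for AI, only capped by hardware
        ran_total += ran
        spare = capacity - ran     # capacity RAN leaves idle in this interval
        ai_served += min(spare, ai_backlog - ai_served)
    return (
        ran_total / total_capacity,
        (ran_total + ai_served) / total_capacity,
        ai_backlog - ai_served,
    )


if __name__ == "__main__":
    # Six 4-hour intervals of RAN load on an accelerator sized for the busy hour.
    profile = [20, 10, 40, 90, 100, 60]
    alone, shared, left = shared_utilization(profile, ai_backlog=150, capacity=100)
    print(f"RAN only: {alone:.0%}, RAN + AI: {shared:.0%}, AI work left: {left}")
```

Because base stations are sized for the busy hour, the night-time troughs leave much of the hardware idle; filling them with AI work raises utilization in this example from about 53% to about 78% without taking any capacity away from RAN.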

AI on RAN

AI on RAN means using the RAN infrastructure to support external AI applications. This is the most imaginative direction. The base station is no longer only a communication node. It becomes a programmable edge intelligence node that can support video analytics, industrial positioning, autonomous systems, smart city applications, connected vehicles, AR/VR, and low-latency AI services.

This is where AI-RAN connects most closely with 6G. Future networks may not only transmit data. They may also sense, compute, analyze, and coordinate intelligent services at the edge.

Industry Progress of AI-RAN

AI-RAN moved from concept to industry action very quickly. In February 2024, at Mobile World Congress in Barcelona, NVIDIA, SoftBank, Ericsson, Nokia, Microsoft, and other founding members launched the AI-RAN Alliance. The alliance started with 11 founding members and soon expanded to more than 100 operators, vendors, and ecosystem partners.

In November 2024, NVIDIA and SoftBank announced a trial of what they described as the world’s first AI-RAN network capable of processing both AI and 5G workloads. In 2025, NVIDIA invested 1 billion USD in Nokia, becoming one of its largest shareholders and strengthening cooperation in 6G RAN and AI-RAN solutions.

NVIDIA also continued building a full-stack AI-RAN solution. In 2025, it introduced Aerial RAN Computer Pro, also called ARC-Pro, and the AI Aerial software platform. The solution integrates technologies such as GB200, BlueField-3, Spectrum-X networking, and CUDA-X libraries for telecom-grade use cases.

Later, NVIDIA and partners promoted the “All-American AI-RAN” technology stack, designed to dynamically allocate computing resources within or across GPUs for vRAN deployment, AI applications, and 6G research. In March 2026, NVIDIA introduced the AI Grid vision at GTC. In that vision, AI-RAN acts as a key edge network and compute layer, while AI Grid provides distributed cloud and orchestration capability.

Two Industry Paths: Join or Build Independently

The telecom industry is forming two broad responses to AI-RAN. The first path is to fully embrace the GPU-based AI-RAN architecture. Operators such as SoftBank and AT&T are interested in network intelligence, operational cost reduction, new service creation, and early positioning for the 6G era. Some industry estimates suggest that AI-RAN-style automation and intelligence may reduce OPEX by more than 30% in certain scenarios.

Nokia is one of the most visible equipment vendors on this path. Its anyRAN software can integrate with NVIDIA's GPU-based AI-RAN platforms. In March 2026, Nokia announced AI-RAN function tests with operators including T-Mobile US, Telkom Indonesia, and SoftBank.

The second path is independent exploration. Many equipment vendors and operators agree that AI will change communication architecture, but they do not want to be deeply locked into a single GPU vendor's ecosystem. Ericsson, for example, has tested RAN software on NVIDIA AI platforms, while also integrating a programmable neural network accelerator into its own Ericsson Silicon chips and pushing AI inference closer to radio-side equipment such as AAUs and RRUs.

Huawei and ZTE are also exploring their own AI and telecom convergence routes. Huawei has proposed an AI-Centric Network, while ZTE has introduced AIR MAX. These strategies show that AI-RAN is not one single vendor’s solution. It is becoming a broader industry direction with multiple technical paths.

Why Operators Are Interested and Concerned

Operators are interested in AI-RAN because it may help them escape the “dumb pipe” problem. Traditional operators often sell connectivity, but the value of connectivity alone is under pressure. If base stations can become programmable AI edge service nodes, operators may create new business models based on low-latency AI inference, industry applications, data exposure, private networks, and edge computing services.

Some operators also talk about moving from “traffic operation” (monetizing data volume) to “token operation” (monetizing AI inference), or from being communication service providers to becoming computing service providers. AI-RAN fits this strategic goal because it combines radio connectivity and edge AI computing.

However, operators are also concerned. If baseband processing and AI inference become deeply tied to one GPU platform, control over the RAN architecture may shift toward that vendor ecosystem. This creates worries about vendor lock-in, technology sovereignty, supply chain dependency, cost transparency, and long-term bargaining power.

Deployment Challenges of AI-RAN

AI-RAN faces several practical challenges before large-scale deployment can become common. The first is cost. CAPEX can be high because AI accelerators, servers, networking equipment, and site upgrades are expensive. OPEX can also be high because GPU-based systems may consume significant electricity and require new cooling, maintenance, and operational processes.
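The electricity share of OPEX is easy to estimate once average power draw is known: annual energy cost = average power × hours per year × cooling and conversion overhead × electricity price. The sketch below applies that formula. The power levels, the PUE-style overhead factors, and the tariff are hypothetical inputs, not figures for any real product.

```python
HOURS_PER_YEAR = 24 * 365  # 8760, ignoring leap years


def annual_energy_cost(avg_power_kw: float, overhead_factor: float, price_per_kwh: float) -> float:
    """Yearly electricity cost of one site's compute.

    overhead_factor is a PUE-style multiplier covering cooling and power conversion
    (1.0 = no overhead). All inputs are assumptions supplied by the caller.
    """
    return avg_power_kw * HOURS_PER_YEAR * overhead_factor * price_per_kwh


if __name__ == "__main__":
    baseline = annual_energy_cost(avg_power_kw=0.5, overhead_factor=1.2, price_per_kwh=0.15)
    gpu_site = annual_energy_cost(avg_power_kw=2.0, overhead_factor=1.4, price_per_kwh=0.15)
    print(f"baseline: {baseline:.0f}, GPU-equipped: {gpu_site:.0f}, "
          f"extra per site: {gpu_site - baseline:.0f}")
```

A per-site difference that looks small becomes significant when multiplied across thousands of sites, which is why power efficiency dominates so much of the AI-RAN cost debate.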

The second challenge is business model design. Operators must answer a difficult question: how can edge AI computing be measured, priced, sold, and operated? Should AI-RAN capacity be sold like cloud computing resources, telecom network slices, industry services, or a completely new category of service?
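One way to make the pricing question concrete is to meter the same usage in two different units and compare the resulting bills. The sketch below contrasts a cloud-style tariff billed per GPU-second with a "token operation" tariff billed per million inference tokens. The record fields, the prices, and the usage numbers are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class UsageRecord:
    gpu_seconds: float  # accelerator time consumed by the customer's jobs
    tokens: int         # inference tokens generated for the customer


def bill_per_gpu_second(usage: list[UsageRecord], price: float) -> float:
    """Cloud-style billing: pay for accelerator time, regardless of output."""
    return sum(r.gpu_seconds for r in usage) * price


def bill_per_million_tokens(usage: list[UsageRecord], price: float) -> float:
    """Token-style billing: pay for the inference output actually produced."""
    return sum(r.tokens for r in usage) / 1_000_000 * price


if __name__ == "__main__":
    month = [
        UsageRecord(gpu_seconds=3600, tokens=2_000_000),
        UsageRecord(gpu_seconds=1800, tokens=500_000),
    ]
    print(bill_per_gpu_second(month, price=0.001), bill_per_million_tokens(month, price=2.0))
```

The two tariffs shift risk differently: per-GPU-second pricing exposes the customer to inefficient models, while per-token pricing leaves the operator carrying the cost of hardware utilization.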

The third challenge is standardization. Telecom standards are usually driven by 3GPP, while AI-RAN is strongly promoted by the AI-RAN Alliance and related ecosystem players. There is still no fully unified industry framework for data semantics, model interfaces, service orchestration, workload scheduling, and commercial responsibility.

The fourth challenge is ecosystem maturity. Chip vendors, telecom equipment vendors, operators, cloud providers, terminal vendors, application developers, and AI model providers all need to decide which technical route to support. At this stage, many companies are still evaluating whether to invest deeply and how to avoid betting on the wrong architecture.

Why Heterogeneous Computing May Be the Real Answer

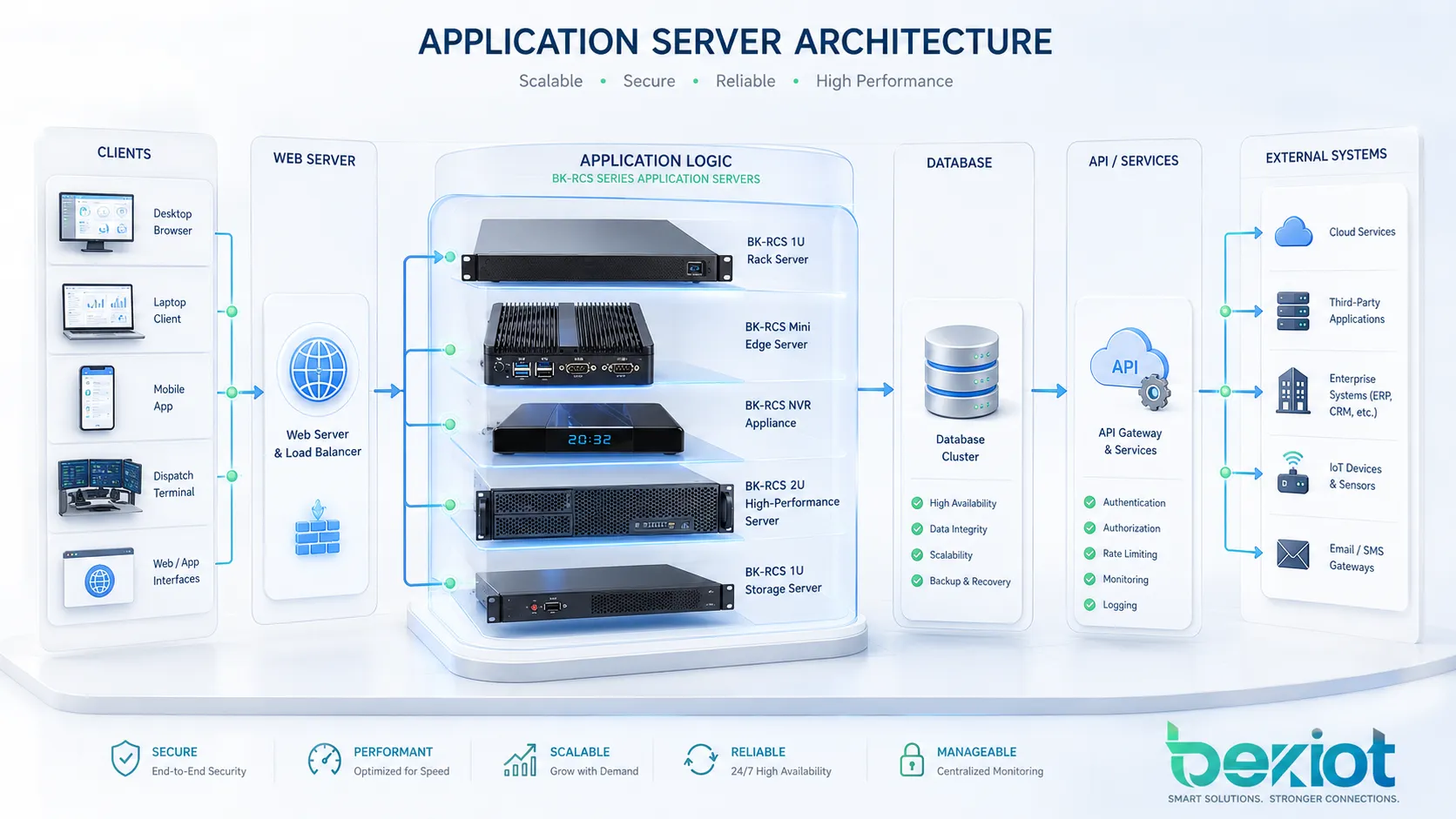

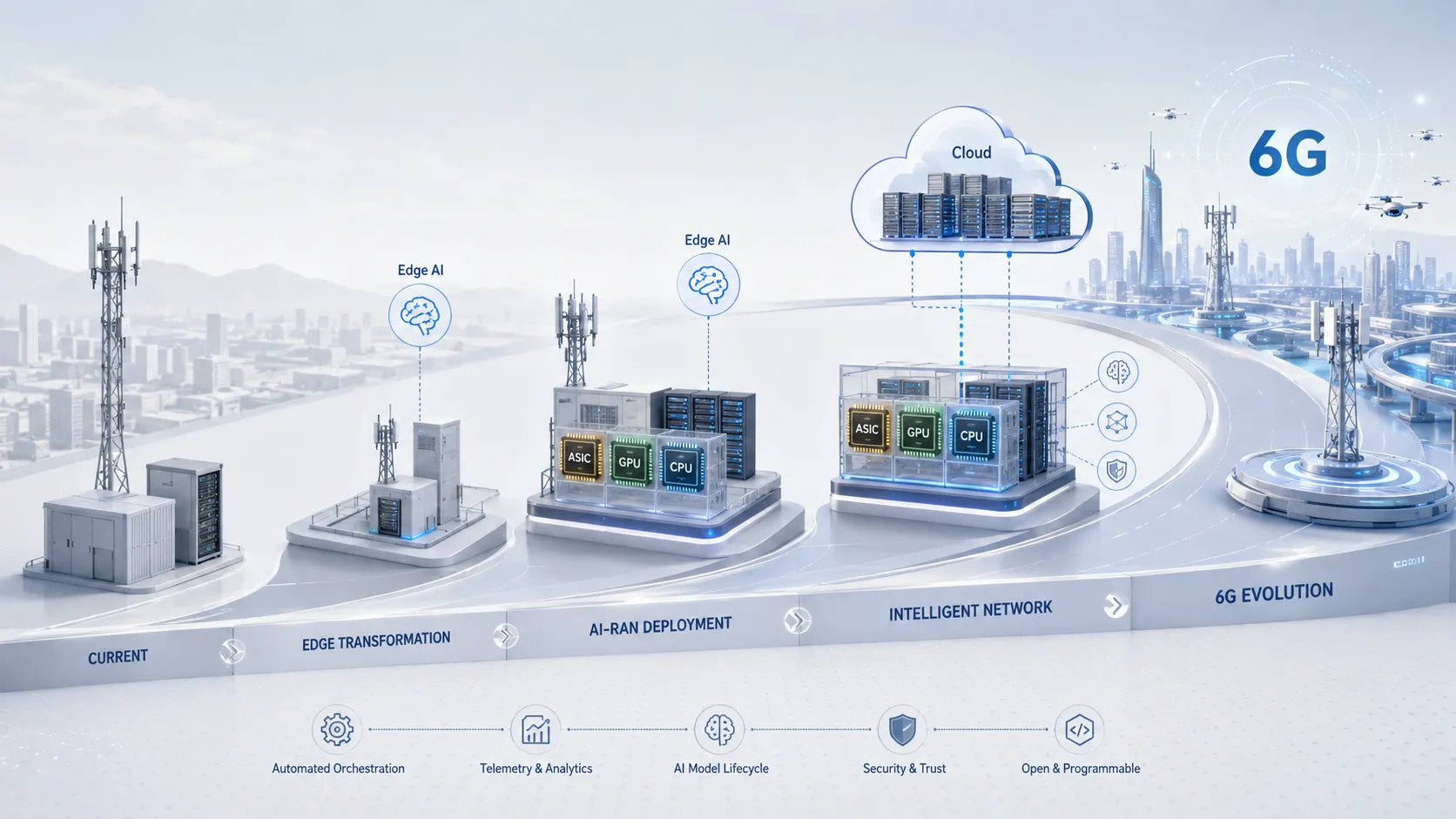

AI-RAN is likely to develop, but the final computing architecture may not be a pure GPU architecture. A more realistic direction is heterogeneous computing, combining ASICs, GPUs, and CPUs, and in some scenarios FPGAs. Each chip type has its own role.

ASICs are efficient for fixed telecom workloads. CPUs provide flexible general-purpose control and service processing. GPUs are strong for parallel AI computing and some accelerated RAN workloads. FPGAs can support programmable acceleration in specialized scenarios. Operators may mix different compute resources based on performance, efficiency, cost, deployment scale, and ecosystem requirements.
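The idea of giving each chip type its own role can be sketched as a greedy placement: for each workload, pick the lowest-energy processor type that can run it and still has spare capacity. The relative energy costs and capacities below are hypothetical numbers chosen only to mirror the qualitative roles described above; they are not benchmark data.

```python
# Relative energy per unit of work (lower is better); None = not a suitable fit.
# Illustrative assumptions that mirror the qualitative roles, not measurements.
ENERGY_COST = {
    "baseband processing": {"ASIC": 1.0, "FPGA": 2.0, "GPU": 3.0, "CPU": 8.0},
    "AI inference": {"ASIC": None, "FPGA": 4.0, "GPU": 1.0, "CPU": 6.0},
    "control and management": {"ASIC": None, "FPGA": None, "GPU": None, "CPU": 1.0},
}


def assign(workloads: dict[str, float], capacity: dict[str, float]) -> dict[str, str]:
    """Greedily place each workload on the cheapest chip type with enough spare capacity."""
    remaining = dict(capacity)
    placement = {}
    for name, demand in workloads.items():
        options = sorted(
            (cost, chip)
            for chip, cost in ENERGY_COST[name].items()
            if cost is not None and remaining.get(chip, 0.0) >= demand
        )
        if not options:
            placement[name] = "unplaced"
            continue
        _, chip = options[0]
        remaining[chip] -= demand
        placement[name] = chip
    return placement


if __name__ == "__main__":
    demand = {"baseband processing": 50, "AI inference": 30, "control and management": 5}
    print(assign(demand, {"ASIC": 60, "GPU": 40, "CPU": 20}))
    print(assign(demand, {"GPU": 100, "CPU": 20}))  # a site without baseband ASICs
```

The second call shows why the mix matters: at a site without ASIC capacity, baseband work falls back to the GPU at a higher assumed energy cost.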

This hybrid architecture may help operators avoid overdependence on a single technology path. It also allows AI-RAN to be deployed gradually, starting from selected use cases such as network optimization, edge video analytics, industrial positioning, private networks, and 6G research platforms.

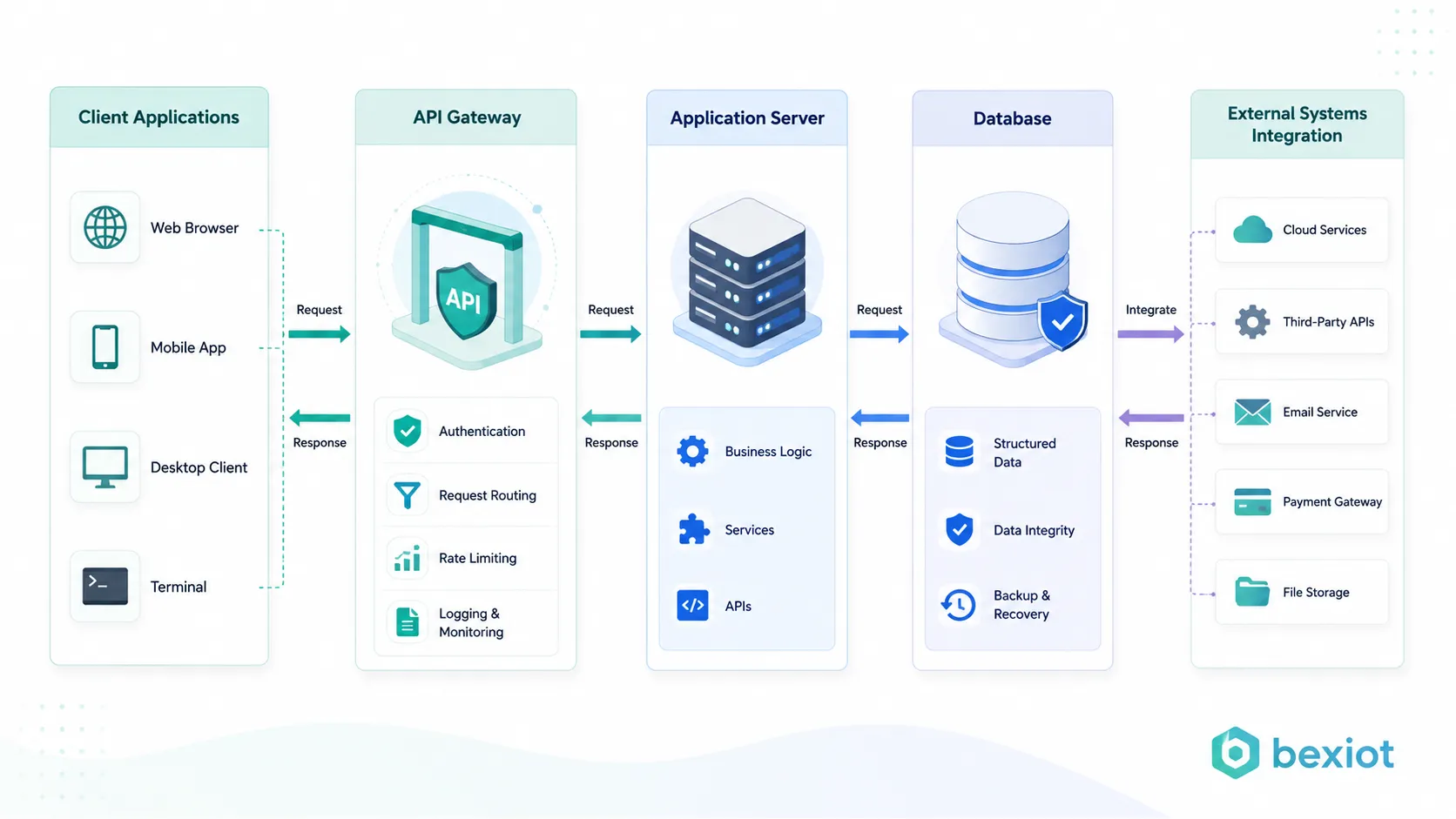

Recommended AI-RAN Solution Architecture

A practical AI-RAN solution should be designed as a layered architecture rather than a simple hardware upgrade. The radio layer includes RU, AAU, DU, and CU functions. The compute layer includes ASIC, CPU, GPU, and possibly FPGA resources. The AI layer includes model runtime, inference engine, data processing, and optimization algorithms. The orchestration layer manages workload scheduling, service exposure, monitoring, and lifecycle management.

In this architecture, telecom workloads must always retain deterministic performance. AI workloads can be scheduled according to priority, available capacity, and latency requirements. For example, real-time RAN processing should take priority over non-critical AI inference, while industrial applications such as video analytics, positioning, and low-latency control should receive resources according to their business SLAs.
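This priority rule can be sketched with a priority queue. Real-time RAN tasks come first, SLA-bound industrial AI tasks come next in deadline order, and best-effort AI inference comes last. Within one scheduling interval, tasks are admitted in that order as long as they fit. The class names, task costs, deadlines, and per-interval capacity are hypothetical, and a production scheduler would also need preemption and hardware isolation, which this sketch omits.

```python
import heapq
from dataclasses import dataclass, field

# Lower number = higher priority. Class names are illustrative assumptions.
PRIORITY = {"ran-realtime": 0, "sla-ai": 1, "best-effort-ai": 2}


@dataclass(order=True)
class Task:
    priority: int
    deadline_ms: float
    name: str = field(compare=False)
    cost: float = field(compare=False)  # compute units needed in this interval


def make(kind: str, name: str, cost: float, deadline_ms: float = float("inf")) -> Task:
    return Task(PRIORITY[kind], deadline_ms, name, cost)


def schedule(tasks: list[Task], capacity: float) -> tuple[list[str], list[str]]:
    """Admit tasks in (priority, deadline) order while they fit in this interval."""
    heap = list(tasks)
    heapq.heapify(heap)
    admitted, deferred = [], []
    while heap:
        task = heapq.heappop(heap)
        if task.cost <= capacity:
            capacity -= task.cost
            admitted.append(task.name)
        else:
            deferred.append(task.name)  # would be retried in a later interval
    return admitted, deferred


if __name__ == "__main__":
    jobs = [
        make("best-effort-ai", "chatbot", 30),
        make("sla-ai", "video-analytics", 25, deadline_ms=50),
        make("ran-realtime", "uplink-decode", 40, deadline_ms=1),
        make("sla-ai", "robot-control", 10, deadline_ms=10),
    ]
    print(schedule(jobs, capacity=80))
```

Here the RAN task and both SLA-bound tasks are admitted, and the best-effort chatbot request is deferred because only 5 units remain.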

Operators should also design an AI-RAN security and governance layer. This includes data privacy, model security, access control, audit logs, service isolation, and fault recovery. Since AI-RAN connects network infrastructure with AI applications, security requirements may become more complex than traditional base station deployment.

AI-RAN Use Cases

Network Optimization

AI can predict traffic patterns, identify interference, optimize radio resource scheduling, improve energy saving, and support automatic network tuning. This belongs mainly to AI for RAN.

Edge Video Analytics

A base station with edge AI computing can process nearby video streams for public safety, industrial monitoring, traffic management, and smart campus applications. This reduces the need to send all data back to a central cloud.
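The backhaul saving can be estimated by comparing the bitrate of raw video streams with the bitrate of the analysis results sent upstream instead. The sketch below uses hypothetical figures for camera count, per-stream bitrate, and metadata rate purely to show the calculation.

```python
def backhaul_saving(
    cameras: int, stream_mbps: float, metadata_kbps: float
) -> tuple[float, float, float]:
    """Return (raw Mbit/s, edge-processed Mbit/s, fraction of backhaul saved).

    Raw: every camera stream is sent to the central cloud.
    Edge: streams are analyzed at the base station and only metadata goes upstream.
    """
    raw = cameras * stream_mbps
    edge = cameras * metadata_kbps / 1000
    return raw, edge, 1 - edge / raw


if __name__ == "__main__":
    raw, edge, saved = backhaul_saving(cameras=50, stream_mbps=4.0, metadata_kbps=20.0)
    print(f"raw: {raw} Mbit/s, edge: {edge} Mbit/s, saved: {saved:.1%}")
```

In practice some clips would still be uploaded for alerts or auditing, so the real saving would be somewhat lower than this idealized figure.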

Industrial Private Networks

In factories, ports, mines, energy sites, and logistics parks, AI-RAN can combine private 5G connectivity with local AI inference. This can support machine vision, robot control, worker safety, equipment inspection, and low-latency production monitoring.

6G Research and AI-Native Networks

AI-RAN may become an important foundation for 6G. Future networks may integrate communication, sensing, computing, and intelligence. AI-RAN provides a possible path toward this AI-native network architecture.

Conclusion

AI-RAN is one of the most important technology directions in the telecom industry. It connects RAN evolution, AI computing, edge infrastructure, Open RAN, GPU acceleration, and 6G strategy. Its goal is not only to improve base station performance, but also to turn the radio access network into an intelligent edge computing platform.

However, AI-RAN is still at an early stage. The industry has made rapid progress since the AI-RAN Alliance was launched in 2024, but large-scale commercial success is not yet guaranteed. High CAPEX, high OPEX, power consumption, vendor lock-in, uncertain business models, standardization gaps, and immature ecosystems remain major challenges.

The most likely future is not a simple replacement of traditional RAN by GPU-based AI-RAN. A more realistic path is heterogeneous computing, phased deployment, open interfaces, business-model validation, and 6G-oriented evolution. AI-RAN may become a core technology of the next telecom revolution, but it still needs time, real-world deployment, and commercial proof.

FAQ

What does AI-RAN mean?

AI-RAN means Artificial Intelligence Radio Access Network. It refers to applying AI technologies to the radio access network and integrating wireless communication workloads with AI computing workloads.

Is AI-RAN only about putting GPUs into base stations?

No. GPUs are an important part of current AI-RAN discussion, but AI-RAN is broader. It includes AI-based network optimization, shared AI and RAN computing infrastructure, and using RAN as an edge AI service platform.

What are AI for RAN, AI and RAN, and AI on RAN?

AI for RAN uses AI to improve network performance and operation. AI and RAN runs AI and communication workloads on shared infrastructure. AI on RAN uses the RAN network as an edge platform for AI applications.

Why is AI-RAN important for 6G?

6G is expected to integrate communication, sensing, computing, and intelligence. AI-RAN may provide the edge computing and AI-native network foundation needed for this evolution.

What are the biggest challenges of AI-RAN?

The main challenges include high CAPEX, high OPEX, power consumption, vendor lock-in risk, uncertain business models, lack of unified standards, and immature ecosystem support.

What computing architecture is most likely for AI-RAN?

A heterogeneous architecture is likely. Operators may combine ASIC, GPU, CPU, and sometimes FPGA resources to balance performance, efficiency, cost, flexibility, and ecosystem control.